Normal distribution


| Normal distribution | |||

|---|---|---|---|

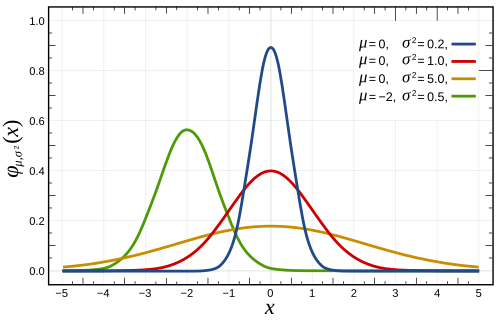

| Probability density function  The red curve is the standard normal distribution. | |||

| Cumulative distribution function  | |||

| Notation | |||

| Parameters | = mean (location) = variance (squared scale) | ||

| Support | |||

| CDF | |||

| Quantile | |||

| Mean | |||

| Median | |||

| Mode | |||

| Variance | |||

| MAD | |||

| AAD | |||

| Skewness | |||

| Excess kurtosis | |||

| Entropy | |||

| MGF | |||

| CF | |||

| Fisher information |

| ||

| Kullback–Leibler divergence | |||

| Expected shortfall | [1] | ||


In probability theory and statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is[2][3][4]

$$f(x) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}.$$

The parameter $\mu$ is the mean or expectation of the distribution (and also its median and mode), while the parameter $\sigma^2$ is the variance. The standard deviation of the distribution is $\sigma$ (sigma). A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate.

Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known.[5][6] Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable whose distribution converges to a normal distribution as the number of samples increases. Therefore, physical quantities that are expected to be the sum of many independent processes, such as measurement errors, often have distributions that are nearly normal.[7]

Moreover, Gaussian distributions have some unique properties that are valuable in analytic studies. For instance, any linear combination of a fixed collection of independent normal deviates is a normal deviate. Many results and methods, such as propagation of uncertainty and least squares[8] parameter fitting, can be derived analytically in explicit form when the relevant variables are normally distributed.
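As an illustration of the linear-combination property, the following Python sketch computes the parameters of $Y = \sum_i a_i X_i$ for independent $X_i \sim \mathcal{N}(\mu_i, \sigma_i^2)$, namely mean $\sum_i a_i \mu_i$ and variance $\sum_i a_i^2 \sigma_i^2$, and compares them with a seeded simulation using the standard library's `random.Random.gauss`. The function name `linear_combination_params` and the sample values are illustrative only, and the simulation checks the first two moments, not normality itself.

```python
import random

def linear_combination_params(coeffs, means, sds):
    """Mean and variance of Y = sum(a_i * X_i) for independent X_i ~ N(mu_i, sd_i**2)."""
    mean = sum(a * m for a, m in zip(coeffs, means))
    var = sum((a * s) ** 2 for a, s in zip(coeffs, sds))
    return mean, var

coeffs = [2.0, -1.0, 0.5]
means = [1.0, 3.0, -2.0]
sds = [1.0, 2.0, 4.0]
mean, var = linear_combination_params(coeffs, means, sds)
print(mean, var)  # -2.0 12.0

# Seeded Monte Carlo check: the sample moments should be close to (mean, var).
rng = random.Random(0)
draws = [sum(a * rng.gauss(m, s) for a, m, s in zip(coeffs, means, sds))
         for _ in range(100_000)]
sample_mean = sum(draws) / len(draws)
sample_var = sum((d - sample_mean) ** 2 for d in draws) / (len(draws) - 1)
print(round(sample_mean, 2), round(sample_var, 1))
```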

A normal distribution is sometimes informally called a bell curve.[9][10] However, many other distributions are bell-shaped (such as the Cauchy, Student's t, and logistic distributions). (For other names, see Naming.)

The univariate probability distribution is generalized for vectors in the multivariate normal distribution and for matrices in the matrix normal distribution.

Definitions

Standard normal distribution

The simplest case of a normal distribution is known as the standard normal distribution or unit normal distribution. This is a special case when $\mu = 0$ and $\sigma^2 = 1$, and it is described by this probability density function (or density):[11]

$$\varphi(z) = \frac{e^{-z^2/2}}{\sqrt{2\pi}}.$$

The variable $z$ has a mean of 0 and a variance and standard deviation of 1. The density $\varphi(z)$ has its peak $\frac{1}{\sqrt{2\pi}}$ at $z = 0$ and inflection points at $z = +1$ and $z = -1$.

Although the density above is most commonly known as the standard normal, a few authors have used that term to describe other versions of the normal distribution. Carl Friedrich Gauss, for example, once defined the standard normal as $\varphi(z) = \frac{e^{-z^2}}{\sqrt{\pi}}$, which has a variance of $\frac{1}{2}$, and Stephen Stigler[12] once defined the standard normal as $\varphi(z) = e^{-\pi z^2}$, which has a simple functional form and a variance of $\sigma^2 = \frac{1}{2\pi}$.

General normal distribution

Every normal distribution is a version of the standard normal distribution, whose domain has been stretched by a factor $\sigma$ (the standard deviation) and then translated by $\mu$ (the mean value):

$$f(x \mid \mu, \sigma^2) = \frac{1}{\sigma}\,\varphi\left(\frac{x-\mu}{\sigma}\right).$$

The probability density must be scaled by $1/\sigma$ so that the integral is still 1.

If $Z$ is a standard normal deviate, then $X = \sigma Z + \mu$ will have a normal distribution with expected value $\mu$ and standard deviation $\sigma$. This is equivalent to saying that the standard normal distribution $Z$ can be scaled/stretched by a factor of $\sigma$ and shifted by $\mu$ to yield a different normal distribution, called $X$. Conversely, if $X$ is a normal deviate with parameters $\mu$ and $\sigma^2$, then this distribution can be re-scaled and shifted via the formula $Z = (X - \mu)/\sigma$ to convert it to the standard normal distribution. This variate is also called the standardized form of $X$.
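The shift-and-scale relationship, $f(x) = \varphi((x - \mu)/\sigma)/\sigma$ with $X = \sigma Z + \mu$ and $Z = (X - \mu)/\sigma$, can be written as a few small Python functions. This is a minimal sketch using only the standard library; the names `std_normal_pdf`, `normal_pdf`, `standardize` and `destandardize` are hypothetical helpers chosen for this example.

```python
import math

def std_normal_pdf(z):
    """Standard normal density phi(z) = exp(-z^2 / 2) / sqrt(2 pi)."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) obtained by shifting and stretching phi."""
    return std_normal_pdf((x - mu) / sigma) / sigma

def standardize(x, mu, sigma):
    """Z = (X - mu) / sigma maps N(mu, sigma^2) to N(0, 1)."""
    return (x - mu) / sigma

def destandardize(z, mu, sigma):
    """X = sigma * Z + mu maps N(0, 1) to N(mu, sigma^2)."""
    return sigma * z + mu

print(normal_pdf(2.7, 1.2, 0.6))  # equals std_normal_pdf(2.5) / 0.6
```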

Notation

The probability density of the standard Gaussian distribution (standard normal distribution, with zero mean and unit variance) is often denoted with the Greek letter $\phi$ (phi).[13] The alternative form of the Greek letter phi, $\varphi$, is also used quite often.

The normal distribution is often referred to as $N(\mu, \sigma^2)$ or $\mathcal{N}(\mu, \sigma^2)$.[14] Thus when a random variable $X$ is normally distributed with mean $\mu$ and standard deviation $\sigma$, one may write

$$X \sim \mathcal{N}(\mu, \sigma^2).$$

Alternative parameterizations

Some authors advocate using the precision $\tau$ as the parameter defining the width of the distribution, instead of the standard deviation $\sigma$ or the variance $\sigma^2$. The precision is normally defined as the reciprocal of the variance, $1/\sigma^2$.[15] The formula for the distribution then becomes

$$f(x) = \sqrt{\frac{\tau}{2\pi}}\, e^{-\tau(x-\mu)^2/2}.$$

This choice is claimed to have advantages in numerical computations when $\sigma$ is very close to zero, and simplifies formulas in some contexts, such as in the Bayesian inference of variables with multivariate normal distribution.

Alternatively, the reciprocal of the standard deviation $\tau' = 1/\sigma$ might be defined as the precision, in which case the expression of the normal distribution becomes

$$f(x) = \frac{\tau'}{\sqrt{2\pi}}\, e^{-(\tau')^2(x-\mu)^2/2}.$$

According to Stigler, this formulation is advantageous because of a much simpler and easier-to-remember formula, and simple approximate formulas for the quantiles of the distribution.
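The three parameterizations describe the same density. A short Python check, assuming `var` is the variance $\sigma^2$, `tau` its reciprocal $1/\sigma^2$ and `tau_prime` the reciprocal $1/\sigma$ of the standard deviation (all function names are illustrative):

```python
import math

def pdf_variance(x, mu, var):
    """Density parameterized by the variance sigma^2."""
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def pdf_precision(x, mu, tau):
    """Density parameterized by the precision tau = 1 / sigma^2."""
    return math.sqrt(tau / (2 * math.pi)) * math.exp(-tau * (x - mu) ** 2 / 2)

def pdf_inverse_sd(x, mu, tau_prime):
    """Density parameterized by tau_prime = 1 / sigma."""
    return tau_prime / math.sqrt(2 * math.pi) * math.exp(-(tau_prime ** 2) * (x - mu) ** 2 / 2)

sigma = 0.3
for x in (-1.0, 0.3, 2.0):
    print(x, pdf_variance(x, 0.5, sigma ** 2), pdf_precision(x, 0.5, 1 / sigma ** 2),
          pdf_inverse_sd(x, 0.5, 1 / sigma))
```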

Normal distributions form an exponential family with natural parameters $\theta_1 = \frac{\mu}{\sigma^2}$ and $\theta_2 = \frac{-1}{2\sigma^2}$, and natural statistics $x$ and $x^2$. The dual expectation parameters for the normal distribution are $\eta_1 = \mu$ and $\eta_2 = \mu^2 + \sigma^2$.

Cumulative distribution function

The cumulative distribution function (CDF) of the standard normal distribution, usually denoted with the capital Greek letter $\Phi$, is the integral

$$\Phi(x) = \frac{1}{\sqrt{2\pi}}\int_{-\infty}^{x} e^{-t^2/2}\, dt.$$

Error function

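The related error function $\operatorname{erf}(x)$ gives the probability of a random variable, with normal distribution of mean 0 and variance 1/2, falling in the range $[-x, x]$. That is:

$$\operatorname{erf}(x) = \frac{1}{\sqrt{\pi}}\int_{-x}^{x} e^{-t^2}\, dt = \frac{2}{\sqrt{\pi}}\int_{0}^{x} e^{-t^2}\, dt.$$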

These integrals cannot be expressed in terms of elementary functions, and are often said to be special functions. However, many numerical approximations are known; see below for more.

The two functions are closely related, namely

$$\Phi(x) = \frac{1}{2}\left[1 + \operatorname{erf}\left(\frac{x}{\sqrt{2}}\right)\right].$$

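For a generic normal distribution with density $f$, mean $\mu$ and variance $\sigma^2$, the cumulative distribution function is

$$F(x) = \Phi\left(\frac{x-\mu}{\sigma}\right) = \frac{1}{2}\left[1 + \operatorname{erf}\left(\frac{x-\mu}{\sigma\sqrt{2}}\right)\right].$$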
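A minimal sketch of the relations $\Phi(x) = \frac{1}{2}[1 + \operatorname{erf}(x/\sqrt{2})]$ and $F(x) = \Phi((x - \mu)/\sigma)$ using Python's standard `math.erf`; the function names `std_normal_cdf` and `normal_cdf` are illustrative, not taken from a particular library.

```python
import math

def std_normal_cdf(x):
    """Phi(x) = (1 + erf(x / sqrt(2))) / 2."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def normal_cdf(x, mu=0.0, sigma=1.0):
    """CDF of N(mu, sigma^2), obtained by standardizing x first."""
    return std_normal_cdf((x - mu) / sigma)

print(std_normal_cdf(0.0))               # 0.5
print(normal_cdf(13.0, mu=10.0, sigma=2.0))  # same as std_normal_cdf(1.5)
```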

The complement of the standard normal cumulative distribution function, $Q(x) = 1 - \Phi(x)$, is often called the Q-function, especially in engineering texts.[16][17] It gives the probability that the value of a standard normal random variable $X$ will exceed $x$: $P(X > x)$. Other definitions of the $Q$-function, all of which are simple transformations of $\Phi$, are also used occasionally.[18]
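Numerically, computing $Q(x) = 1 - \Phi(x)$ by subtraction loses all precision once $\Phi(x)$ rounds to 1. A small Python sketch, assuming the complementary error function `math.erfc` is used instead (the name `q_function` is illustrative):

```python
import math

def q_function(x):
    """Q(x) = P(Z > x) = 1 - Phi(x), computed as erfc(x / sqrt(2)) / 2.

    Using erfc avoids the cancellation in 1 - Phi(x) when Phi(x) is close to 1.
    """
    return 0.5 * math.erfc(x / math.sqrt(2.0))

naive = 1.0 - 0.5 * (1.0 + math.erf(10.0 / math.sqrt(2.0)))
print(naive)             # 0.0: erf rounds to 1.0 in double precision
print(q_function(10.0))  # about 7.6e-24
```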

The graph of the standard normal cumulative distribution function $\Phi$ has 2-fold rotational symmetry around the point (0, 1/2); that is, $\Phi(-x) = 1 - \Phi(x)$. Its antiderivative (indefinite integral) can be expressed as follows:

$$\int \Phi(x)\, dx = x\Phi(x) + \varphi(x) + C.$$

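The cumulative distribution function of the standard normal distribution can be expanded by integration by parts into a series:

$$\Phi(x) = \frac{1}{2} + \frac{1}{\sqrt{2\pi}}\, e^{-x^2/2}\left[x + \frac{x^3}{3} + \frac{x^5}{3\cdot 5} + \cdots + \frac{x^{2n+1}}{(2n+1)!!} + \cdots\right],$$

where $!!$ denotes the double factorial.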

An asymptotic expansion of the cumulative distribution function for large x can also be derived using integration by parts. For more, see Error function § Asymptotic expansion.[19]

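A quick approximation to the standard normal distribution's cumulative distribution function can be found by using a Taylor series approximation:

$$\Phi(x) \approx \frac{1}{2} + \frac{1}{\sqrt{2\pi}}\sum_{k=0}^{n}\frac{(-1)^k x^{2k+1}}{2^k\, k!\, (2k+1)}.$$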
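Both expansions can be evaluated term by term and compared against `math.erf`. The sketch below implements the double-factorial series $\Phi(x) = \frac{1}{2} + \varphi(x)\sum_{n \ge 0} x^{2n+1}/(2n+1)!!$ and the Taylor series $\Phi(x) \approx \frac{1}{2} + \frac{1}{\sqrt{2\pi}}\sum_k (-1)^k x^{2k+1}/(2^k k!(2k+1))$; the fixed term count of 60 is an assumption that is ample for moderate $|x|$. For large $|x|$ the alternating Taylor series suffers from cancellation, which is why it is described as a quick approximation.

```python
import math

def cdf_double_factorial_series(x, terms=60):
    """Phi(x) = 1/2 + phi(x) * sum_{n>=0} x^(2n+1) / (2n+1)!!."""
    term = x              # n = 0 term: x / 1!!
    total = term
    for n in range(1, terms):
        term *= x * x / (2 * n + 1)   # turns x^(2n-1)/(2n-1)!! into x^(2n+1)/(2n+1)!!
        total += term
    return 0.5 + math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi) * total

def cdf_taylor_series(x, terms=60):
    """Phi(x) ~= 1/2 + (1/sqrt(2 pi)) * sum_k (-1)^k x^(2k+1) / (2^k k! (2k+1))."""
    total = 0.0
    coeff = 1.0           # (-1)^k / (2^k k!) for k = 0
    for k in range(terms):
        total += coeff * x ** (2 * k + 1) / (2 * k + 1)
        coeff *= -0.5 / (k + 1)
    return 0.5 + total / math.sqrt(2 * math.pi)

for x in (-2.0, 0.0, 1.0, 2.5):
    exact = 0.5 * (1 + math.erf(x / math.sqrt(2)))
    print(x, exact, cdf_double_factorial_series(x), cdf_taylor_series(x))
```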

Recursive computation with Taylor series expansion

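The recursive nature of the $e^{ax^2}$ family of derivatives may be used to easily construct a rapidly converging Taylor series expansion using recursive entries about any point of known value of the distribution, $\Phi(x_0)$:

$$\Phi(x) = \sum_{n=0}^{\infty}\frac{\Phi^{(n)}(x_0)}{n!}(x - x_0)^n,$$

where:

- $\Phi^{(0)}(x_0) = \frac{1}{\sqrt{2\pi}}\int_{-\infty}^{x_0} e^{-t^2/2}\, dt$

- $\Phi^{(1)}(x_0) = \frac{1}{\sqrt{2\pi}}\, e^{-x_0^2/2}$

- $\Phi^{(n)}(x_0) = -\left(x_0\,\Phi^{(n-1)}(x_0) + (n-2)\,\Phi^{(n-2)}(x_0)\right), \quad n \geq 2.$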
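A sketch of this recursive Taylor expansion in Python, assuming the value $\Phi(x_0)$ is supplied by the caller and using the recurrence $d_0 = \Phi(x_0)$, $d_1 = e^{-x_0^2/2}/\sqrt{2\pi}$, $d_n = -(x_0 d_{n-1} + (n-2) d_{n-2})$ for the derivatives. The function name and the fixed number of terms are illustrative choices.

```python
import math

def cdf_taylor_about(x, x0, phi_x0, terms=40):
    """Phi(x) from a Taylor expansion about x0, given the known value phi_x0 = Phi(x0)."""
    h = x - x0
    d_prev2 = phi_x0                                        # d_0 = Phi(x0)
    d_prev1 = math.exp(-0.5 * x0 * x0) / math.sqrt(2 * math.pi)  # d_1 = phi(x0)
    total = d_prev2 + d_prev1 * h
    h_pow_over_fact = h                                     # h^n / n! for n = 1
    for n in range(2, terms):
        d_n = -(x0 * d_prev1 + (n - 2) * d_prev2)           # recurrence for the n-th derivative
        h_pow_over_fact *= h / n
        total += d_n * h_pow_over_fact
        d_prev2, d_prev1 = d_prev1, d_n
    return total

# Expand about x0 = 0, where Phi(0) = 1/2 is known exactly.
print(cdf_taylor_about(-0.7, 0.0, 0.5))
```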

Using the Taylor series and Newton's method for the inverse function

An application for the above Taylor series expansion is to use Newton's method to reverse the computation. That is, if we have a value for the cumulative distribution function, $\Phi(x)$, but do not know the $x$ needed to obtain that $\Phi(x)$, we can use Newton's method to find $x$, and use the Taylor series expansion above to minimize the number of computations. Newton's method is well suited to this problem because the first derivative of $\Phi(x)$, which is an integral of the standard normal density, is the standard normal density itself, and is readily available for use in the Newton iteration.

To solve, select a known approximate solution, $x_0$, to the desired $\Phi(x)$. $x_0$ may be a value from a distribution table, or an intelligent estimate followed by a computation of $\Phi(x_0)$ by any convenient means. Use this value of $x_0$ and the Taylor series expansion above to minimize computations.

Repeat the following process until the difference between the computed $\Phi(x_n)$ and the desired $\Phi$, which we will call $\Phi(\text{desired})$, is below a chosen acceptably small error, such as $10^{-5}$, $10^{-15}$, etc.:

$$x_{n+1} = x_n - \frac{\Phi(x_n, x_0, \Phi(x_0)) - \Phi(\text{desired})}{\Phi'(x_n)},$$

where

- $\Phi(x, x_0, \Phi(x_0))$ is the $\Phi(x)$ from a Taylor series solution using $x_0$ and $\Phi(x_0)$, and

- $\Phi'(x_n) = \frac{1}{\sqrt{2\pi}}\, e^{-x_n^2/2}$.

When the repeated computations converge to an error below the chosen acceptably small value, $x$ will be the value needed to obtain a $\Phi(x)$ of the desired value, $\Phi(\text{desired})$.
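The Newton iteration $x_{n+1} = x_n - (\Phi(x_n) - p)/\varphi(x_n)$ can be sketched in Python. As a simplification (an assumption of this sketch, not the procedure described in the text), $\Phi(x_n)$ is evaluated with `math.erfc` rather than with the Taylor series about $x_0$; the starting point $x_0 = 0$, the tolerance and the iteration cap are illustrative choices. Because $\Phi$ is concave for $x > 0$ and convex for $x < 0$, starting at 0 gives monotone convergence toward the root.

```python
import math

def std_normal_cdf(x):
    # Phi(x) via erfc, which stays accurate in the lower tail
    return 0.5 * math.erfc(-x / math.sqrt(2.0))

def std_normal_pdf(x):
    # phi(x) = Phi'(x), the derivative used by Newton's method
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def inverse_cdf_newton(p, x0=0.0, tol=1e-12, max_iter=100):
    """Solve Phi(x) = p for x by Newton's method starting from x0."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    x = x0
    for _ in range(max_iter):
        step = (std_normal_cdf(x) - p) / std_normal_pdf(x)
        x -= step
        if abs(step) < tol:
            break
    return x

print(inverse_cdf_newton(0.975))  # close to 1.959963984540
```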

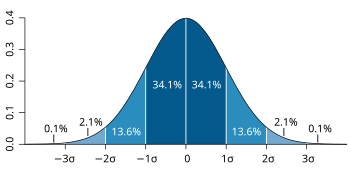

Standard deviation and coverage


About 68% of values drawn from a normal distribution are within one standard deviation σ from the mean; about 95% of the values lie within two standard deviations; and about 99.7% are within three standard deviations.[9] This fact is known as the 68–95–99.7 (empirical) rule, or the 3-sigma rule.

More precisely, the probability that a normal deviate lies in the range between $\mu - n\sigma$ and $\mu + n\sigma$ is given by

$$F(\mu + n\sigma) - F(\mu - n\sigma) = \Phi(n) - \Phi(-n) = \operatorname{erf}\left(\frac{n}{\sqrt{2}}\right).$$

To 12 decimal places, the values for $n = 1, 2, \ldots, 6$ are:

| $n$ | $p = F(\mu + n\sigma) - F(\mu - n\sigma)$ | $1 - p$ | OEIS |
|---|---|---|---|
| 1 | 0.682689492137 | 0.317310507863 | OEIS: A178647 |
| 2 | 0.954499736104 | 0.045500263896 | OEIS: A110894 |
| 3 | 0.997300203937 | 0.002699796063 | OEIS: A270712 |
| 4 | 0.999936657516 | 0.000063342484 | |
| 5 | 0.999999426697 | 0.000000573303 | |
| 6 | 0.999999998027 | 0.000000001973 | |

For large $n$, one can use the approximation $1 - p \approx \frac{e^{-n^2/2}}{n\sqrt{\pi/2}}$.
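The coverage values follow from $p = \operatorname{erf}(n/\sqrt{2})$, and the tail $1 - p$ is best computed directly with the complementary error function so that small tails keep their precision. A short Python sketch with illustrative function names, including the large-$n$ approximation $1 - p \approx e^{-n^2/2}/(n\sqrt{\pi/2})$:

```python
import math

def coverage(n):
    """P(mu - n*sigma < X < mu + n*sigma) for X ~ N(mu, sigma^2), i.e. erf(n / sqrt(2))."""
    return math.erf(n / math.sqrt(2.0))

def tail_outside(n):
    """1 - coverage(n), computed with erfc so that small tails keep full relative precision."""
    return math.erfc(n / math.sqrt(2.0))

def tail_outside_approx(n):
    """Large-n approximation: 1 - p ~ exp(-n^2 / 2) / (n * sqrt(pi / 2))."""
    return math.exp(-0.5 * n * n) / (n * math.sqrt(math.pi / 2.0))

for n in range(1, 7):
    print(n, f"{coverage(n):.12f}", f"{tail_outside(n):.12f}",
          f"approx {tail_outside_approx(n):.3e}")
```

The approximation slightly overestimates the tail; its relative error shrinks roughly like $1/n^2$.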

Quantile function

The quantile function of a distribution is the inverse of the cumulative distribution function. The quantile function of the standard normal distribution is called the probit function, and can be expressed in terms of the inverse error function:

$$\Phi^{-1}(p) = \sqrt{2}\operatorname{erf}^{-1}(2p - 1), \quad p \in (0, 1).$$

For a normal random variable with mean $\mu$ and variance $\sigma^2$, the quantile function is

$$F^{-1}(p) = \mu + \sigma\Phi^{-1}(p) = \mu + \sigma\sqrt{2}\operatorname{erf}^{-1}(2p - 1), \quad p \in (0, 1).$$

The quantile $\Phi^{-1}(p)$ of the standard normal distribution is commonly denoted as $z_p$. These values are used in hypothesis testing, construction of confidence intervals and Q–Q plots. A normal random variable $X$ will exceed $\mu + z_p\sigma$ with probability $1 - p$, and will lie outside the interval $\mu \pm z_p\sigma$ with probability $2(1 - p)$. In particular, the quantile $z_{0.975}$ is 1.96; therefore a normal random variable will lie outside the interval $\mu \pm 1.96\sigma$ in only 5% of cases.
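Python's standard library exposes the normal quantile function as `statistics.NormalDist.inv_cdf` (Python 3.8 and later). A short usage sketch; the parameter values $\mu = 10$, $\sigma = 2$ are illustrative:

```python
from statistics import NormalDist

std = NormalDist()                  # mu = 0, sigma = 1
z_975 = std.inv_cdf(0.975)          # z_p for p = 0.975
print(round(z_975, 2))              # 1.96

# The quantile of N(mu, sigma^2) is mu + sigma * z_p.
mu, sigma = 10.0, 2.0
x_975 = NormalDist(mu, sigma).inv_cdf(0.975)
print(x_975, mu + sigma * z_975)

# Probability of falling outside mu +/- z_p * sigma is 2 * (1 - p).
outside = 2 * (1 - std.cdf(z_975))
print(round(outside, 4))            # 0.05
```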

The following table gives the quantile $z_p$ such that $X$ will lie in the range $\mu \pm z_p\sigma$ with a specified probability $p$. These values are useful to determine tolerance intervals for sample averages and other statistical estimators with normal (or asymptotically normal) distributions.[20] The following table shows $\sqrt{2}\operatorname{erf}^{-1}(p) = \Phi^{-1}\left(\frac{p+1}{2}\right)$, not $\Phi^{-1}(p)$ as defined above.

| $p$ | $z_p$ | $p$ | $z_p$ |
|---|---|---|---|
| 0.80 | 1.281551565545 | 0.999 | 3.290526731492 |
| 0.90 | 1.644853626951 | 0.9999 | 3.890591886413 |
| 0.95 | 1.959963984540 | 0.99999 | 4.417173413469 |
| 0.98 | 2.326347874041 | 0.999999 | 4.891638475699 |
| 0.99 | 2.575829303549 | 0.9999999 | 5.326723886384 |
| 0.995 | 2.807033768344 | 0.99999999 | 5.730728868236 |
| 0.998 | 3.090232306168 | 0.999999999 | 6.109410204869 |
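The two-sided quantiles in this table can be reproduced as $\Phi^{-1}\left(\frac{p+1}{2}\right)$ with `statistics.NormalDist.inv_cdf`. A minimal sketch (the helper name is illustrative); note that forming $(p+1)/2$ in double precision limits the attainable accuracy in the most extreme rows.

```python
from statistics import NormalDist

def two_sided_quantile(p):
    """z such that P(-z < Z < z) = p, i.e. Phi^{-1}((p + 1) / 2)."""
    return NormalDist().inv_cdf((p + 1.0) / 2.0)

for p in (0.80, 0.90, 0.95, 0.98, 0.99, 0.995, 0.998, 0.999, 0.9999):
    print(f"{p:<8} {two_sided_quantile(p):.12f}")
```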

For small $p$, the quantile function has the useful asymptotic expansion $\Phi^{-1}(p) = -\sqrt{\ln\frac{1}{p^2} - \ln\ln\frac{1}{p^2} - \ln(2\pi)} + o(1).$[citation needed]
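The leading terms of this expansion, $-\sqrt{L - \ln L - \ln(2\pi)}$ with $L = \ln(1/p^2)$, can be compared against `statistics.NormalDist.inv_cdf`. In this sketch (function name illustrative) the $o(1)$ remainder is simply dropped, so the gap should shrink as $p$ decreases; $L$ is computed as $-2\ln p$ to avoid overflow for very small $p$.

```python
import math
from statistics import NormalDist

def probit_small_p_asymptotic(p):
    """Leading terms of the small-p expansion of Phi^{-1}(p); the o(1) term is dropped."""
    L = -2.0 * math.log(p)          # ln(1 / p^2)
    return -math.sqrt(L - math.log(L) - math.log(2.0 * math.pi))

for p in (1e-4, 1e-10, 1e-20, 1e-50):
    exact = NormalDist().inv_cdf(p)
    approx = probit_small_p_asymptotic(p)
    print(p, exact, approx, exact - approx)
```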

Properties

The normal distribution is the only distribution whose cumulants beyond the first two (i.e., other than the mean and variance) are zero. It is also the continuous distribution with the maximum entropy for a specified mean and variance.[21][22] Geary has shown, assuming that the mean and variance are finite, that the normal distribution is the only distribution where the mean and variance calculated from a set of independent draws are independent of each other.[23][24]

The normal distribution is a subclass of the elliptical distributions. The normal distribution is symmetric about its mean, and is non-zero over the entire real line. As such it may not be a suitable model for variables that are inherently positive or strongly skewed, such as the weight of a person or the price of a share. Such variables may be better described by other distributions, such as the log-normal distribution or the Pareto distribution.

The value of the normal density is practically zero when the value lies more than a few standard deviations away from the mean (e.g., a spread of three standard deviations covers all but 0.27% of the total distribution). Therefore, it may not be an appropriate model when one expects a significant fraction of outliers (values that lie many standard deviations away from the mean), and least squares and other statistical inference methods that are optimal for normally distributed variables often become highly unreliable when applied to such data. In those cases, a more heavy-tailed distribution should be assumed and the appropriate robust statistical inference methods applied.

The Gaussian distribution belongs to the family of stable distributions, which are the attractors of sums of independent, identically distributed random variables whether or not the mean or variance is finite. Except for the Gaussian, which is a limiting case, all stable distributions have heavy tails and infinite variance. It is one of the few distributions that are stable and that have probability density functions that can be expressed analytically, the others being the Cauchy distribution and the Lévy distribution.

Symmetries and derivatives

The normal distribution with density $f(x)$ (mean $\mu$ and variance $\sigma^2 > 0$) has the following properties:

- It is symmetric around the point $x = \mu$, which is at the same time the mode, the median and the mean of the distribution.[25]

- It is unimodal: its first derivative is positive for $x < \mu$, negative for $x > \mu$, and zero only at $x = \mu$.

- The area bounded by the curve and the $x$-axis is unity (i.e. equal to one).

- Its first derivative is $f'(x) = -\frac{x - \mu}{\sigma^2}\, f(x)$.

- Its second derivative is $f''(x) = \frac{(x - \mu)^2 - \sigma^2}{\sigma^4}\, f(x)$.

- Its density has two inflection points (where the second derivative of $f$ is zero and changes sign), located one standard deviation away from the mean, namely at $x = \mu - \sigma$ and $x = \mu + \sigma$.[25]

- Its density is log-concave.[25]

- Its density is infinitely differentiable, indeed supersmooth of order 2.[26]
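The derivative formulas $f'(x) = -\frac{x-\mu}{\sigma^2} f(x)$ and $f''(x) = \frac{(x-\mu)^2 - \sigma^2}{\sigma^4} f(x)$, and the sign change of $f''$ at $\mu \pm \sigma$, can be checked numerically. A Python sketch with illustrative values $\mu = 1.5$, $\sigma = 0.8$ and hypothetical function names:

```python
import math

def normal_pdf(x, mu, sigma):
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))

def pdf_first_derivative(x, mu, sigma):
    # f'(x) = -(x - mu) / sigma^2 * f(x)
    return -(x - mu) / sigma ** 2 * normal_pdf(x, mu, sigma)

def pdf_second_derivative(x, mu, sigma):
    # f''(x) = ((x - mu)^2 - sigma^2) / sigma^4 * f(x); zero at x = mu +/- sigma
    return ((x - mu) ** 2 - sigma ** 2) / sigma ** 4 * normal_pdf(x, mu, sigma)

mu, sigma = 1.5, 0.8
for x in (mu - sigma - 0.1, mu - sigma + 0.1, mu + sigma - 0.1, mu + sigma + 0.1):
    # convex (f'' > 0) outside [mu - sigma, mu + sigma], concave inside
    print(f"x = {x:.1f}: f''(x) = {pdf_second_derivative(x, mu, sigma):+.4f}")
```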

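Furthermore, the density $\varphi$ of the standard normal distribution (i.e. $\mu = 0$ and $\sigma = 1$) also has the following properties:

- Its first derivative is $\varphi'(x) = -x\varphi(x)$.

- Its second derivative is $\varphi''(x) = (x^2 - 1)\varphi(x)$.

- More generally, its $n$th derivative is $\varphi^{(n)}(x) = (-1)^n \operatorname{He}_n(x)\varphi(x)$, where $\operatorname{He}_n(x)$ is the $n$th (probabilist) Hermite polynomial.[27]

- The probability that a normally distributed variable $X$ with known $\mu$ and $\sigma^2$ is in a particular set can be calculated by using the fact that the fraction $Z = (X - \mu)/\sigma$ has a standard normal distribution.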
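The Hermite-polynomial form of the derivatives can be computed with the standard three-term recurrence $\operatorname{He}_{k+1}(x) = x\operatorname{He}_k(x) - k\operatorname{He}_{k-1}(x)$, starting from $\operatorname{He}_0 = 1$ and $\operatorname{He}_1 = x$. The sketch below also shows the standardization trick for interval probabilities; all function names are hypothetical.

```python
import math

def hermite_prob(n, x):
    """Probabilists' Hermite polynomial He_n(x), via He_{k+1} = x He_k - k He_{k-1}."""
    if n == 0:
        return 1.0
    h_prev, h = 1.0, x               # He_0, He_1
    for k in range(1, n):
        h_prev, h = h, x * h - k * h_prev
    return h

def std_pdf_derivative(n, x):
    """n-th derivative of the standard normal density: (-1)^n He_n(x) phi(x)."""
    phi = math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi)
    return (-1) ** n * hermite_prob(n, x) * phi

def normal_interval_probability(a, b, mu, sigma):
    """P(a < X < b) for X ~ N(mu, sigma^2), by standardizing Z = (X - mu) / sigma."""
    def cdf(z):
        return 0.5 * math.erfc(-z / math.sqrt(2))
    return cdf((b - mu) / sigma) - cdf((a - mu) / sigma)

print([hermite_prob(n, 2.0) for n in range(5)])      # He_0..He_4 at x = 2
print(normal_interval_probability(8.0, 12.0, 10.0, 2.0))  # one-sigma coverage
```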

Moments

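The plain and absolute moments of a variable $X$ are the expected values of $X^p$ and $|X|^p$, respectively. If the expected value $\mu$ of $X$ is zero, these parameters are called central moments; otherwise, these parameters are called non-central moments. Usually we are interested only in moments with integer order $p$.

If $X$ has a normal distribution, the non-central moments exist and are finite for any $p$ whose real part is greater than −1. For any non-negative integer $p$, the plain central moments are:[28]

$$\operatorname{E}\left[(X-\mu)^p\right] = \begin{cases} 0 & \text{if } p \text{ is odd,} \\ \sigma^p (p-1)!! & \text{if } p \text{ is even.} \end{cases}$$

Here $n!!$ denotes the double factorial, that is, the product of all numbers from $n$ to 1 that have the same parity as $n$.

The central absolute moments coincide with plain moments for all even orders, but are nonzero for odd orders. For any non-negative integer $p$,

$$\operatorname{E}\left[|X-\mu|^p\right] = \sigma^p (p-1)!! \cdot \begin{cases} \sqrt{\frac{2}{\pi}} & \text{if } p \text{ is odd} \\ 1 & \text{if } p \text{ is even} \end{cases} = \sigma^p \cdot \frac{2^{p/2}\,\Gamma\left(\frac{p+1}{2}\right)}{\sqrt{\pi}}.$$

The last formula is valid also for any non-integer $p > -1$. When the mean $\mu \neq 0$, the plain and absolute moments can be expressed in terms of confluent hypergeometric functions ${}_1F_1$ and $U$:[29]

$$\begin{aligned} \operatorname{E}\left[X^p\right] &= \sigma^p \cdot \left(-i\sqrt{2}\right)^p\, U\left(-\frac{p}{2}, \frac{1}{2}, -\frac{\mu^2}{2\sigma^2}\right), \\ \operatorname{E}\left[|X|^p\right] &= \sigma^p \cdot 2^{p/2}\, \frac{\Gamma\left(\frac{1+p}{2}\right)}{\sqrt{\pi}}\, {}_1F_1\left(-\frac{p}{2}, \frac{1}{2}, -\frac{\mu^2}{2\sigma^2}\right). \end{aligned}$$

These expressions remain valid even if $p$ is not an integer. See also generalized Hermite polynomials.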

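| Order | Non-central moment, $\operatorname{E}[X^p]$ | Central moment, $\operatorname{E}[(X-\mu)^p]$ |
|---|---|---|
| 1 | $\mu$ | $0$ |
| 2 | $\mu^2 + \sigma^2$ | $\sigma^2$ |
| 3 | $\mu^3 + 3\mu\sigma^2$ | $0$ |
| 4 | $\mu^4 + 6\mu^2\sigma^2 + 3\sigma^4$ | $3\sigma^4$ |
| 5 | $\mu^5 + 10\mu^3\sigma^2 + 15\mu\sigma^4$ | $0$ |
| 6 | $\mu^6 + 15\mu^4\sigma^2 + 45\mu^2\sigma^4 + 15\sigma^6$ | $15\sigma^6$ |
| 7 | $\mu^7 + 21\mu^5\sigma^2 + 105\mu^3\sigma^4 + 105\mu\sigma^6$ | $0$ |
| 8 | $\mu^8 + 28\mu^6\sigma^2 + 210\mu^4\sigma^4 + 420\mu^2\sigma^6 + 105\sigma^8$ | $105\sigma^8$ |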
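The table entries follow from expanding $X^p = (\mu + (X - \mu))^p$ binomially and using the central moments ($0$ for odd orders, $\sigma^p (p-1)!!$ for even orders). A Python sketch using exact `fractions.Fraction` arithmetic; the example values $\mu = 3/2$, $\sigma^2 = 4$ and the function names are illustrative.

```python
from fractions import Fraction
from math import comb

def double_factorial(n):
    """n!! for n >= -1, with the convention (-1)!! = 0!! = 1."""
    result = 1
    while n > 1:
        result *= n
        n -= 2
    return result

def central_moment(p, sigma2):
    """E[(X - mu)^p] for X ~ N(mu, sigma2): 0 for odd p, sigma^p (p - 1)!! for even p."""
    if p % 2:
        return Fraction(0)
    return Fraction(sigma2) ** (p // 2) * double_factorial(p - 1)

def raw_moment(p, mu, sigma2):
    """E[X^p] from the binomial expansion of (mu + (X - mu))^p."""
    return sum(comb(p, k) * Fraction(mu) ** (p - k) * central_moment(k, sigma2)
               for k in range(p + 1))

mu, s2 = Fraction(3, 2), Fraction(4)   # exact example values: mu = 1.5, sigma^2 = 4
for p in range(1, 9):
    print(p, raw_moment(p, mu, s2), central_moment(p, s2))
```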

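The expectation of $X$ conditioned on the event that $X$ lies in an interval $[a, b]$ is given by

$$\operatorname{E}\left[X \mid a < X < b\right] = \mu - \sigma^2\,\frac{f(b) - f(a)}{F(b) - F(a)},$$

where $f$ and $F$ respectively are the density and the cumulative distribution function of $X$. For $b = \infty$ this is known as the inverse Mills ratio. Note that above, the density $f$ of $X$ is used instead of the standard normal density as in the inverse Mills ratio, so here we have $\sigma^2$ instead of $\sigma$.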
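The truncated-mean formula $\mu - \sigma^2 (f(b) - f(a))/(F(b) - F(a))$ can be checked against a direct numerical estimate of $\int_a^b x f(x)\,dx / \int_a^b f(x)\,dx$. In this sketch the midpoint rule and its step count are assumptions of the check, not part of the formula; the function names are illustrative. With $a = 0$, $b = \infty$ and a standard normal, the result is the inverse Mills ratio at 0, $\sqrt{2/\pi}$.

```python
import math

def normal_pdf(x, mu, sigma):
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def normal_cdf(x, mu, sigma):
    return 0.5 * math.erfc(-(x - mu) / (sigma * math.sqrt(2)))

def truncated_mean(a, b, mu, sigma):
    """E[X | a < X < b] = mu - sigma^2 (f(b) - f(a)) / (F(b) - F(a)); b may be math.inf."""
    num = normal_pdf(b, mu, sigma) - normal_pdf(a, mu, sigma)
    den = normal_cdf(b, mu, sigma) - normal_cdf(a, mu, sigma)
    return mu - sigma ** 2 * num / den

def truncated_mean_numeric(a, b, mu, sigma, n=20_000):
    """Midpoint-rule estimate of the same conditional expectation (finite a, b only)."""
    h = (b - a) / n
    xs = [a + (i + 0.5) * h for i in range(n)]
    w = [normal_pdf(x, mu, sigma) for x in xs]
    return sum(x * wi for x, wi in zip(xs, w)) / sum(w)

print(truncated_mean(-0.5, 2.5, 1.0, 1.5), truncated_mean_numeric(-0.5, 2.5, 1.0, 1.5))
print(truncated_mean(0.0, math.inf, 0.0, 1.0))  # sqrt(2 / pi)
```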

Fourier transform and characteristic function

The Fourier transform of a normal density $f$ with mean $\mu$ and variance $\sigma^2$ is[30]

$$\hat f(t) = \int_{-\infty}^{\infty} f(x)\, e^{-itx}\, dx = e^{-i\mu t}\, e^{-\frac{1}{2}(\sigma t)^2},$$

where $i$ is the imaginary unit. If the mean $\mu = 0$, the first factor is 1, and the Fourier transform is, apart from a constant factor, a normal density on the frequency domain, with mean 0 and variance $1/\sigma^2$. In particular, the standard normal distribution $\varphi$ is an eigenfunction of the Fourier transform.

In probability theory, the Fourier transform of the probability distribution of a real-valued random variable $X$ is closely connected to the characteristic function $\varphi_X(t)$ of that variable, which is defined as the expected value of $e^{itX}$, as a function of the real variable $t$ (the frequency parameter of the Fourier transform). This definition can be analytically extended to a complex-value variable $t$.[31] The relation between both is:

$$\varphi_X(t) = \hat f(-t).$$
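A numerical check of the transform $\int f(x) e^{-itx}\,dx = e^{-i\mu t} e^{-(\sigma t)^2/2}$ and of the characteristic function $\operatorname{E}[e^{itX}]$, obtained by evaluating the same closed form at $-t$. The sketch truncates the integral to $\mu \pm 12\sigma$ and uses the trapezoid rule; both are assumptions of the check, and the function names are illustrative.

```python
import cmath
import math

def normal_pdf(x, mu, sigma):
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def fourier_numeric(t, mu, sigma, half_width=12.0, n=4000):
    """Trapezoid approximation of integral f(x) exp(-i t x) dx over mu +/- half_width * sigma."""
    a, b = mu - half_width * sigma, mu + half_width * sigma
    h = (b - a) / n
    total = 0j
    for k in range(n + 1):
        x = a + k * h
        weight = 0.5 if k in (0, n) else 1.0
        total += weight * normal_pdf(x, mu, sigma) * cmath.exp(-1j * t * x)
    return total * h

def fourier_closed_form(t, mu, sigma):
    """exp(-i mu t) * exp(-(sigma t)^2 / 2)."""
    return cmath.exp(-1j * mu * t) * math.exp(-0.5 * (sigma * t) ** 2)

def characteristic_function(t, mu, sigma):
    """E[exp(i t X)], i.e. the Fourier transform evaluated at -t."""
    return fourier_closed_form(-t, mu, sigma)

mu, sigma = 1.0, 1.3
for t in (0.0, 0.7, 2.5):
    print(t, fourier_numeric(t, mu, sigma), fourier_closed_form(t, mu, sigma))
```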

Moment- and cumulant-generating functions

The moment generating function of a real random variable $X$ is the expected value of $e^{tX}$, as a function of the real parameter $t$. For a normal distribution with density $f$, mean $\mu$ and variance $\sigma^2$, the moment generating function exists and is equal to

$$M(t) = \operatorname{E}\left[e^{tX}\right] = \hat f(it) = e^{\mu t}\, e^{\sigma^2 t^2/2}.$$

For any $k$, the coefficient of $t^k/k!$ in the moment generating function (expressed as an exponential power series in $t$) is the normal distribution's expected value $\operatorname{E}[X^k]$.

The cumulant generating function is the logarithm of the moment generating function, namely

$$g(t) = \ln M(t) = \mu t + \frac{1}{2}\sigma^2 t^2.$$

The coefficients of this exponential power series define the cumulants, but because this is a quadratic polynomial in $t$, only the first two cumulants are nonzero, namely the mean $\mu$ and the variance $\sigma^2$.

Some authors prefer to instead work with the characteristic function $\operatorname{E}[e^{itX}] = e^{i\mu t - \sigma^2 t^2/2}$ and $\ln \operatorname{E}[e^{itX}] = i\mu t - \frac{1}{2}\sigma^2 t^2$.
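Reading moments off the moment generating function can be done exactly by multiplying the power series of $e^{\mu t}$ and $e^{\sigma^2 t^2/2}$ and taking $\operatorname{E}[X^k] = k! \times$ (coefficient of $t^k$). A Python sketch with `fractions.Fraction`; the example values $\mu = 3/2$, $\sigma^2 = 4$ and the function name are illustrative.

```python
from fractions import Fraction
from math import factorial

def mgf_raw_moments(mu, sigma2, max_order):
    """Return [E[X^0], ..., E[X^max_order]] for X ~ N(mu, sigma2).

    Uses M(t) = exp(mu t) * exp(sigma2 t^2 / 2): multiply the two power series
    and read off E[X^k] = k! * (coefficient of t^k).
    """
    mu, sigma2 = Fraction(mu), Fraction(sigma2)
    a = [mu ** j / factorial(j) for j in range(max_order + 1)]   # series of exp(mu t)
    b = [Fraction(0)] * (max_order + 1)                          # series of exp(sigma2 t^2 / 2)
    for m in range(max_order // 2 + 1):
        b[2 * m] = (sigma2 / 2) ** m / factorial(m)
    return [factorial(k) * sum(a[j] * b[k - j] for j in range(k + 1))
            for k in range(max_order + 1)]

mu, s2 = Fraction(3, 2), Fraction(4)
print(mgf_raw_moments(mu, s2, 4))
```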

Stein operator and class

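Within Stein's method the Stein operator and class of a random variable $X \sim \mathcal{N}(\mu, \sigma^2)$ are $\mathcal{A}f(x) = \sigma^2 f'(x) - (x - \mu)f(x)$ and $\mathcal{F}$, the class of all absolutely continuous functions $f : \mathbb{R} \to \mathbb{R}$ such that $\operatorname{E}[|f'(X)|] < \infty$.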
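The defining property of this operator is that $\operatorname{E}[\sigma^2 f'(X) - (X - \mu) f(X)] = 0$ for $X \sim \mathcal{N}(\mu, \sigma^2)$ and suitable $f$. A numerical sketch of that identity, assuming a midpoint rule over $\mu \pm 12\sigma$; the function name and test functions are illustrative.

```python
import math

def stein_expectation(f, f_prime, mu, sigma, n=20_000, half_width=12.0):
    """Midpoint-rule estimate of E[sigma^2 f'(X) - (X - mu) f(X)] for X ~ N(mu, sigma^2)."""
    a = mu - half_width * sigma
    h = 2.0 * half_width * sigma / n
    total = 0.0
    for i in range(n):
        x = a + (i + 0.5) * h
        density = math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))
        total += (sigma ** 2 * f_prime(x) - (x - mu) * f(x)) * density
    return total * h

print(stein_expectation(math.sin, math.cos, 0.5, 1.7))                     # approximately 0
print(stein_expectation(lambda x: x ** 2, lambda x: 2 * x, 0.5, 1.7))      # approximately 0
```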

Zero-variance limit

In the limit when $\sigma^2$ approaches zero, the probability density $f(x)$ approaches zero everywhere except at $\mu$, where it approaches $\infty$, while its integral remains equal to 1. An extension of the normal distribution to the case with zero variance can be defined using the Dirac delta measure $\delta_\mu$, although the resulting random variables are not absolutely continuous and thus do not have probability density functions. The cumulative distribution function of such a random variable is then the Heaviside step function translated by the mean $\mu$, namely

$$F(x) = \begin{cases} 0 & \text{if } x < \mu, \\ 1 & \text{if } x \geq \mu. \end{cases}$$

Maximum entropy

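Of all probability distributions over the reals with a specified finite mean $\mu$ and finite variance $\sigma^2$, the normal distribution $N(\mu, \sigma^2)$ is the one with maximum entropy.[21] To see this, let $X$ be a continuous random variable with probability density $f(x)$. The entropy of $X$ is defined as[32][33][34]

$$H(X) = -\int_{-\infty}^{\infty} f(x)\ln f(x)\, dx,$$

where $f(x)\ln f(x)$ is understood to be zero whenever $f(x) = 0$. This functional can be maximized, subject to the constraints that the distribution is properly normalized and has a specified mean and variance, by using variational calculus. A function with three Lagrange multipliers is defined:

$$L = -\int_{-\infty}^{\infty} f(x)\ln f(x)\, dx - \lambda_0\left(1 - \int_{-\infty}^{\infty} f(x)\, dx\right) - \lambda_1\left(\mu - \int_{-\infty}^{\infty} f(x)\, x\, dx\right) - \lambda_2\left(\sigma^2 - \int_{-\infty}^{\infty} f(x)(x - \mu)^2\, dx\right).$$

At maximum entropy, a small variation $\delta f(x)$ about $f(x)$ will produce a variation $\delta L$ about $L$ which is equal to 0:

$$0 = \delta L = \int_{-\infty}^{\infty} \delta f(x)\left(-\ln f(x) - 1 + \lambda_0 + \lambda_1 x + \lambda_2 (x - \mu)^2\right) dx.$$

Since this must hold for any small $\delta f(x)$, the factor multiplying $\delta f(x)$ must be zero, and solving for $f(x)$ yields:

$$f(x) = \exp\left(-1 + \lambda_0 + \lambda_1 x + \lambda_2 (x - \mu)^2\right).$$

The Lagrange constraints that $f(x)$ is properly normalized and has the specified mean and variance are satisfied if and only if $\lambda_0$, $\lambda_1$, and $\lambda_2$ are chosen so that

$$f(x) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}.$$

The entropy of a normal distribution $X \sim N(\mu, \sigma^2)$ is equal to

$$H(X) = \frac{1}{2}\left(1 + \ln\left(2\sigma^2\pi\right)\right),$$

which is independent of the mean $\mu$.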
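The closed-form entropy $\frac{1}{2}(1 + \ln(2\pi\sigma^2))$ (in nats) can be checked by integrating $-f \ln f$ numerically, and compared with two other densities of the same variance. The Laplace and uniform entropies used for comparison are standard results added only for illustration; the midpoint rule and the function names are assumptions of this sketch.

```python
import math

def normal_entropy(sigma):
    """Differential entropy of N(mu, sigma^2) in nats: (1 + ln(2 pi sigma^2)) / 2."""
    return 0.5 * (1.0 + math.log(2.0 * math.pi * sigma ** 2))

def normal_entropy_numeric(mu, sigma, n=20_000, half_width=12.0):
    """-integral of f ln f over mu +/- half_width * sigma, by the midpoint rule."""
    a = mu - half_width * sigma
    h = 2.0 * half_width * sigma / n
    total = 0.0
    for i in range(n):
        x = a + (i + 0.5) * h
        log_f = -0.5 * ((x - mu) / sigma) ** 2 - math.log(sigma * math.sqrt(2.0 * math.pi))
        total -= math.exp(log_f) * log_f
    return total * h

def laplace_entropy(sigma):
    # Laplace with scale b = sigma / sqrt(2) has variance 2 b^2 = sigma^2 and entropy 1 + ln(2 b)
    return 1.0 + math.log(2.0 * sigma / math.sqrt(2.0))

def uniform_entropy(sigma):
    # Uniform of width w = sigma * sqrt(12) has variance w^2 / 12 = sigma^2 and entropy ln(w)
    return math.log(sigma * math.sqrt(12.0))

for s in (0.5, 1.0, 3.0):
    print(s, normal_entropy(s), laplace_entropy(s), uniform_entropy(s))
```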

Other properties

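- If the characteristic function $\phi_X$ of some random variable $X$ is of the form $\phi_X(t) = \exp Q(t)$ in a neighborhood of zero, where $Q(t)$ is a polynomial, then the Marcinkiewicz theorem (named after Józef Marcinkiewicz) asserts that $Q$ can be at most a quadratic polynomial, and therefore $X$ is a normal random variable.[35] The consequence of this result is that the normal distribution is the only distribution with a finite number (two) of non-zero cumulants.

- If $X$ and $Y$ are jointly normal and uncorrelated, then they are independent. The requirement that $X$ and $Y$ should be jointly normal is essential; without it the property does not hold.[36][37][proof] For non-normal random variables uncorrelatedness does not imply independence.

- The Kullback–Leibler divergence of one normal distribution $X_1 \sim N(\mu_1, \sigma_1^2)$ from another $X_2 \sim N(\mu_2, \sigma_2^2)$ is given by:[38]
$$D_{\mathrm{KL}}(X_1 \parallel X_2) = \frac{(\mu_1 - \mu_2)^2}{2\sigma_2^2} + \frac{1}{2}\left(\frac{\sigma_1^2}{\sigma_2^2} - 1 - \ln\frac{\sigma_1^2}{\sigma_2^2}\right).$$
The Hellinger distance between the same distributions is equal to
$$H^2(X_1, X_2) = 1 - \sqrt{\frac{2\sigma_1\sigma_2}{\sigma_1^2 + \sigma_2^2}}\, e^{-\frac{1}{4}\frac{(\mu_1 - \mu_2)^2}{\sigma_1^2 + \sigma_2^2}}.$$

- The Fisher information matrix for a normal distribution w.r.t. $\mu$ and $\sigma^2$ is diagonal and takes the form
$$\mathcal{I}(\mu, \sigma^2) = \begin{pmatrix} \frac{1}{\sigma^2} & 0 \\ 0 & \frac{1}{2\sigma^4} \end{pmatrix}.$$

- The conjugate prior of the mean of a normal distribution is another normal distribution.[39] Specifically, if $x_1, \ldots, x_n$ are iid $\sim N(\mu, \sigma^2)$ and the prior is $\mu \sim N(\mu_0, \sigma_0^2)$, then the posterior distribution for the estimator of $\mu$ will be
$$\mu \mid x_1, \ldots, x_n \sim \mathcal{N}\left(\frac{\frac{\sigma^2}{n}\mu_0 + \sigma_0^2\bar{x}}{\frac{\sigma^2}{n} + \sigma_0^2}, \left(\frac{n}{\sigma^2} + \frac{1}{\sigma_0^2}\right)^{-1}\right).$$

- The family of normal distributions not only forms an exponential family (EF), but in fact forms a natural exponential family (NEF) with quadratic variance function (NEF-QVF). Many properties of normal distributions generalize to properties of NEF-QVF distributions, NEF distributions, or EF distributions generally. NEF-QVF distributions comprise six families, including the Poisson, gamma, binomial, and negative binomial distributions, while many of the common families studied in probability and statistics are NEF or EF.

- In information geometry, the family of normal distributions forms a statistical manifold with constant curvature $-1$. The same family is flat with respect to the (±1)-connections $\nabla^{(e)}$ and $\nabla^{(m)}$.[40]

- If $X_1, \ldots, X_n$ are distributed according to $N(0, \sigma^2)$, then $\operatorname{E}[\max_i X_i] \leq \sigma\sqrt{2\ln n}$. Note that there is no assumption of independence.[41]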
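Three of the closed forms in this list, the Kullback–Leibler divergence, the squared Hellinger distance and the conjugate-prior posterior for the mean (with $\sigma$ treated as known), translate directly into small Python functions. This is a sketch with hypothetical function names and illustrative inputs, not a library API.

```python
import math

def kl_normal(mu1, s1, mu2, s2):
    """D_KL(N(mu1, s1^2) || N(mu2, s2^2)) in nats."""
    r = (s1 / s2) ** 2
    return (mu1 - mu2) ** 2 / (2 * s2 ** 2) + 0.5 * (r - 1 - math.log(r))

def hellinger_sq_normal(mu1, s1, mu2, s2):
    """Squared Hellinger distance between N(mu1, s1^2) and N(mu2, s2^2)."""
    v = s1 ** 2 + s2 ** 2
    return 1 - math.sqrt(2 * s1 * s2 / v) * math.exp(-((mu1 - mu2) ** 2) / (4 * v))

def posterior_normal_mean(xs, sigma, mu0, sigma0):
    """Posterior (mean, variance) of mu given iid xs ~ N(mu, sigma^2) and prior mu ~ N(mu0, sigma0^2)."""
    n = len(xs)
    xbar = sum(xs) / n
    precision = n / sigma ** 2 + 1 / sigma0 ** 2      # posterior precision adds up
    mean = (mu0 / sigma0 ** 2 + n * xbar / sigma ** 2) / precision
    return mean, 1 / precision

print(kl_normal(1.0, 1.0, 0.0, 1.0))                             # 0.5
print(hellinger_sq_normal(0.0, 1.0, 3.0, 2.0))
print(posterior_normal_mean([1.0, 2.0, 3.0], 1.0, 0.0, 1.0))      # (1.5, 0.25)
```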

Related distributions

Central limit theorem

The central limit theorem states that under certain (fairly common) conditions, the sum of many random variables will have an approximately normal distribution. More specifically, where $X_1, \ldots, X_n$ are independent and identically distributed random variables with the same arbitrary distribution, zero mean, and variance $\sigma^2$, and $Z$ is their mean scaled by $\sqrt{n}$:

$$Z = \sqrt{n}\left(\frac{1}{n}\sum_{i=1}^{n} X_i\right)$$
